Product + platform case study

LoopPrep

AI-Assisted Frontend Interview Prep Platform

Company

LoopPrep (Personal Product)

Role

Full-Stack Engineer

Duration

Ongoing

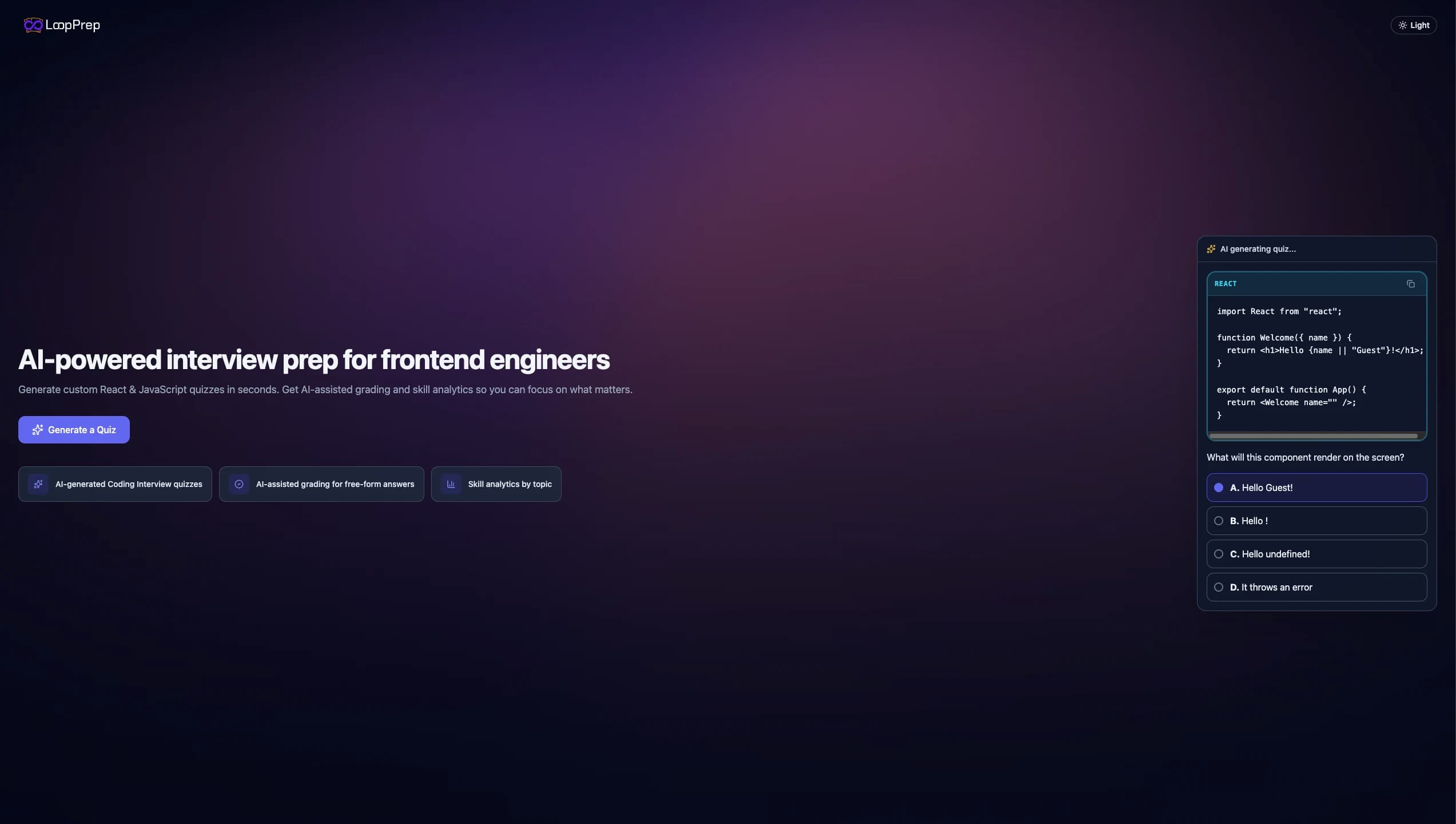

Built an interactive interview-prep quiz platform that helps frontend engineers practice in a structured loop: configure a quiz, run it question-by-question, then review results by topic. It is AI-assisted but reliability-first: generation and grading stay predictable through strict schema validation, normalization, and a deterministic fallback when AI is unavailable.

Context

From scattered prep to a structured interview loop

Frontend interview prep often feels fragmented: flashcards in one tab, random lists in another, and practice that doesn’t adapt to weaknesses. I wanted something closer to an actual interview: interactive, focused, and repeatable.

LoopPrep is built around a simple routine: generate a targeted quiz, complete it with momentum, review mistakes by topic, then iterate. The result is a real preparation loop rather than passive reading.

The engineering challenge showed up immediately: AI can create great content, but it can also fail (rate limits, missing keys, malformed outputs). An interview-prep tool must be trustworthy, so I designed LoopPrep to be AI-assisted but never AI-dependent, making predictable behavior a guarantee through strict contracts and fallback paths.

Problem

What a serious interview-prep platform must guarantee

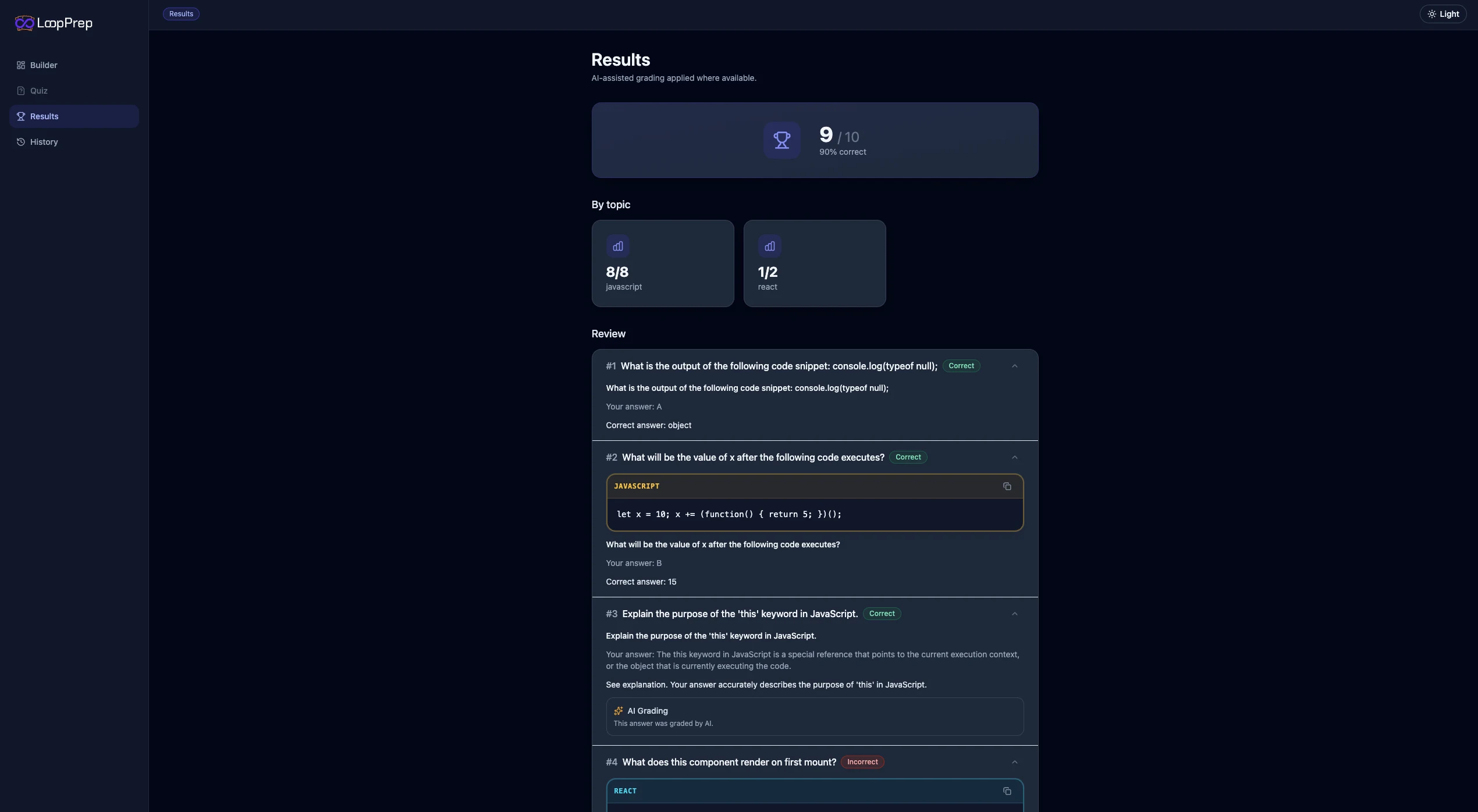

- Realistic practice: mixed question types (MCQ, code reading, short answers) with structured review and per-topic performance feedback.

- Reliability despite AI: generation and grading must still work when AI is unavailable or output is malformed.

- Strong domain contracts: shared schema-driven validation between frontend and backend to prevent integration drift.

- Safe session handling: users shouldn’t lose progress to refresh or accidental navigation during an active quiz.

- A smooth free-tier experience: limit uncontrolled AI usage and offer a lightweight unlock mechanism, without heavy authentication.

Contributions

Key full-stack engineering contributions

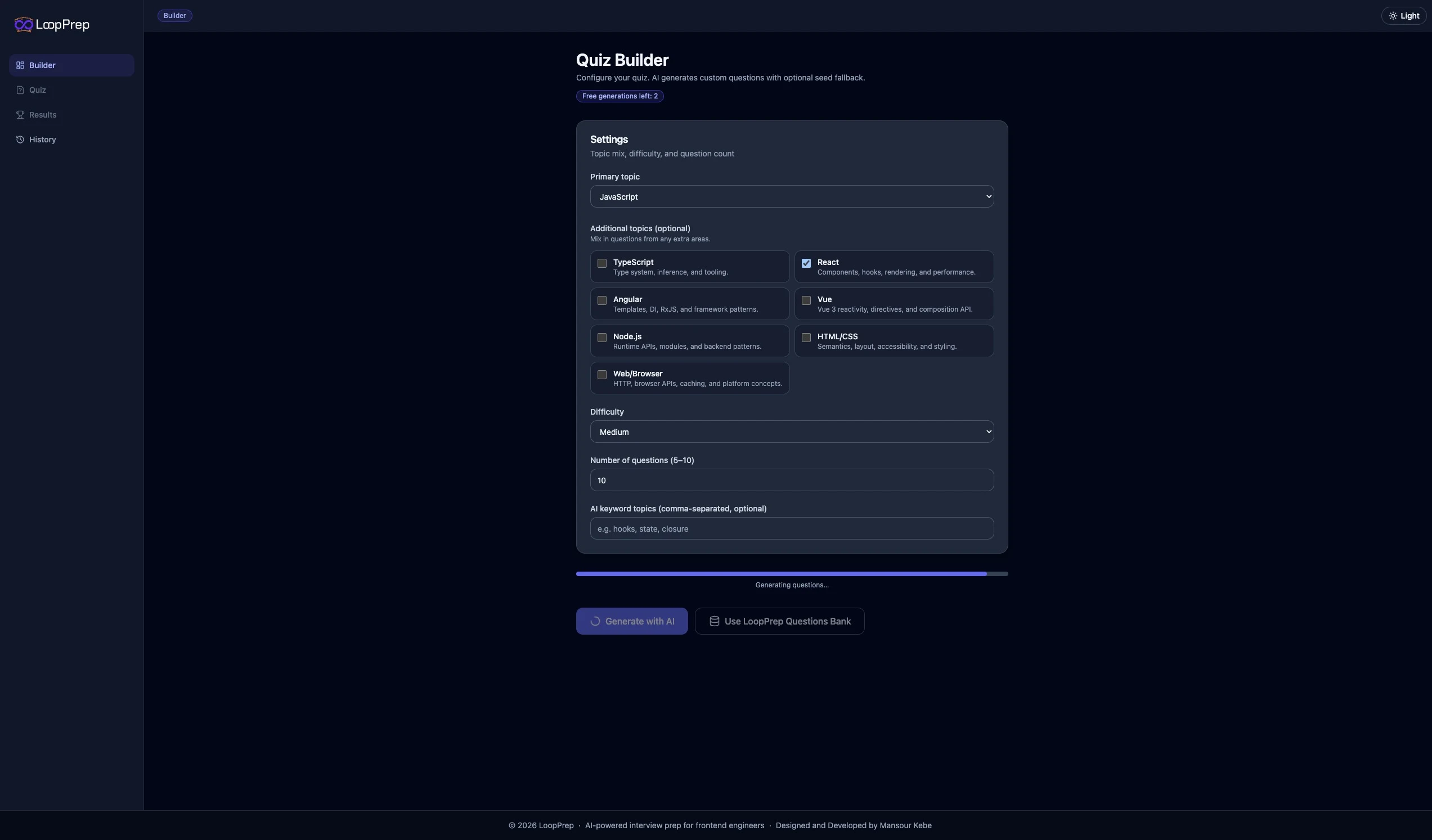

Reliability-First Generation & Contracts (API + Shared)

- Implemented shared schema-driven contracts in a monorepo “shared” package to keep frontend and backend aligned on quiz settings, question types, results, and validation rules.

- Built a defensive generation pipeline: parse → normalize/coerce → validate → dedupe → fill missing/invalid items → final validation, ensuring the UI never depends on malformed AI output.

- Implemented deterministic fallback generation from a curated seed bank, including difficulty widening when needed, shuffling, and source metadata tagging.

- Added a lightweight free-tier limiter for AI generation with an unlock-token flow for “unlimited mode” in demo/product settings.
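
The defensive pipeline described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual code: the `Question` shape, the `SEED_BANK` contents, and the `buildQuiz`, `normalize`, and `coerceIndex` names are all assumptions, and validation is done with plain type checks to keep the example dependency-free.

```typescript
// Hypothetical question shape; the real shared contract is not shown in this write-up.
type Question = {
  id: string;
  topic: string;
  prompt: string;
  choices: string[];
  answerIndex: number;
};

// Illustrative seed bank standing in for the curated one.
const SEED_BANK: Question[] = [
  { id: "seed-1", topic: "javascript", prompt: "What does typeof null return?", choices: ["null", "object"], answerIndex: 1 },
  { id: "seed-2", topic: "css", prompt: "Which property sets the flex main axis?", choices: ["flex-direction", "align-items"], answerIndex: 0 },
  { id: "seed-3", topic: "react", prompt: "Which hook stores local component state?", choices: ["useState", "useMemo"], answerIndex: 0 },
];

// Validate: non-empty text, at least two choices, answer index in range.
function isValid(q: Question): boolean {
  return (
    q.prompt.length > 0 &&
    q.topic.length > 0 &&
    q.choices.length >= 2 &&
    Number.isInteger(q.answerIndex) &&
    q.answerIndex >= 0 &&
    q.answerIndex < q.choices.length
  );
}

// Accept a number or a numeric string; anything else becomes NaN and fails validation.
function coerceIndex(value: unknown): number {
  if (typeof value === "number") return value;
  if (typeof value === "string" && value.trim() !== "") return Number(value);
  return NaN;
}

// Normalize/coerce one raw item; returns null when it cannot be repaired.
function normalize(raw: unknown, index: number): Question | null {
  if (typeof raw !== "object" || raw === null) return null;
  const r = raw as Record<string, unknown>;
  const candidate: Question = {
    id: `ai-${index}`,
    topic: typeof r.topic === "string" ? r.topic.trim().toLowerCase() : "",
    prompt: typeof r.prompt === "string" ? r.prompt.trim() : "",
    choices: Array.isArray(r.choices)
      ? r.choices.filter((c): c is string => typeof c === "string")
      : [],
    answerIndex: coerceIndex(r.answerIndex),
  };
  return isValid(candidate) ? candidate : null;
}

function buildQuiz(aiOutput: string, count: number): Question[] {
  let parsed: unknown = [];
  try {
    parsed = JSON.parse(aiOutput); // parse
  } catch (_err) {
    parsed = []; // malformed output: rely entirely on the seed bank
  }
  const items: unknown[] = Array.isArray(parsed) ? parsed : [];
  const seen = new Set<string>();
  const quiz: Question[] = [];
  const add = (q: Question | null): void => {
    if (q === null || quiz.length >= count) return;
    const key = q.prompt.toLowerCase();
    if (seen.has(key)) return; // dedupe on case-insensitive prompt
    seen.add(key);
    quiz.push(q);
  };
  items.forEach((raw, i) => add(normalize(raw, i))); // normalize + validate
  SEED_BANK.forEach((q) => add(q)); // fill missing slots from seeds
  return quiz.filter(isValid); // final validation
}
```

Calling `buildQuiz("not json", 2)` in this sketch returns the first two seed questions, so the caller always receives a well-formed list even when the model output cannot be parsed.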

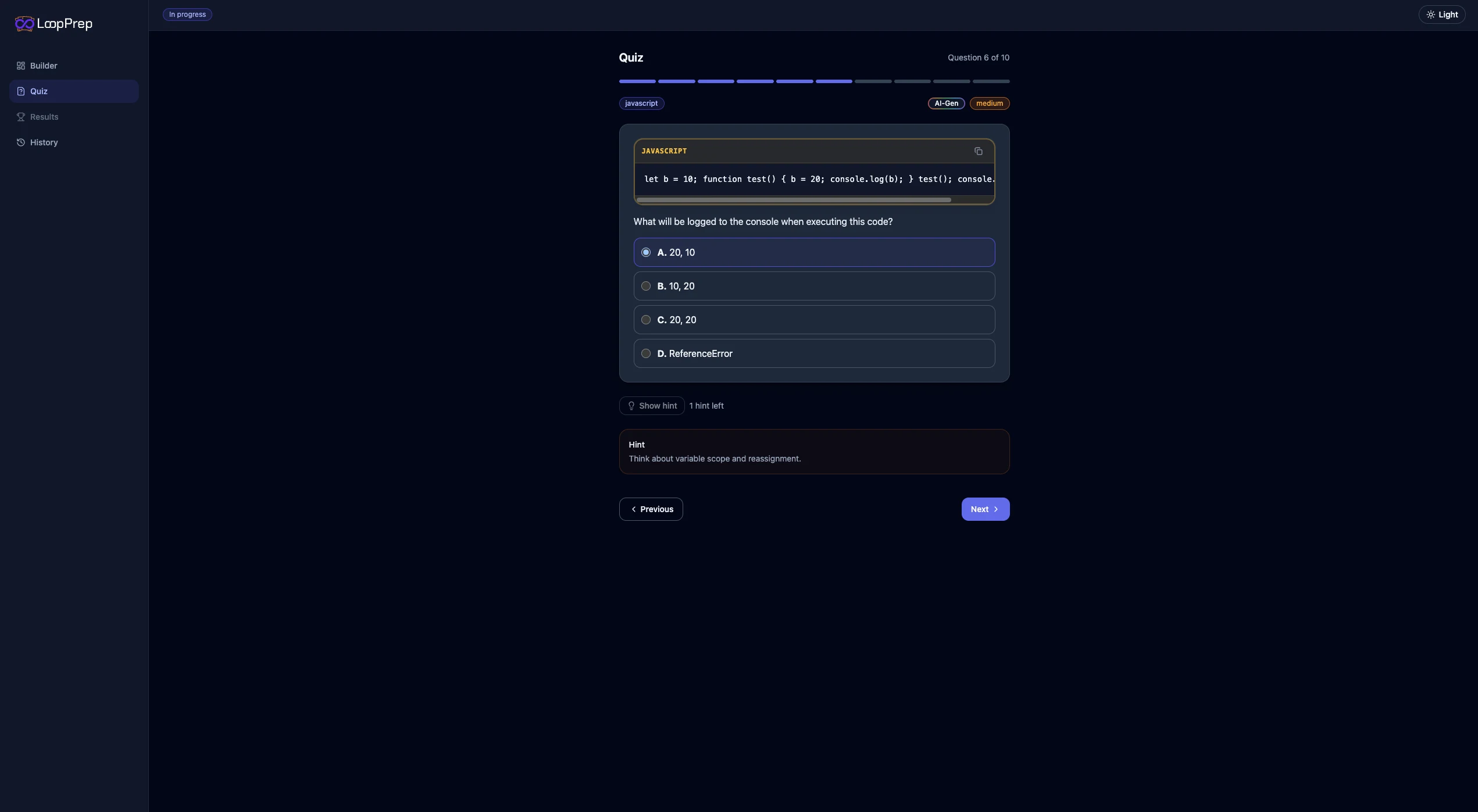

Quiz UX, Persistence & Flow Safety (Web)

- Built an end-to-end quiz flow (builder → runner → results) with progress indicators, answer validation gates, and structured review per question.

- Implemented local persistence with namespaced storage keys, schema versioning, updated timestamps, and TTL to keep sessions fast while avoiding stale state.

- Added session safety with a flow guard: intercept in-app navigation and browser unload events during active quizzes to prevent accidental progress loss.

- Implemented resilience safeguards that clear only quiz-related storage when server boot/schema metadata changes, avoiding corrupted state after server restarts or schema updates.
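
A minimal sketch of the persistence envelope described above, under stated assumptions: the `loopprep:quiz:` namespace, the version number, the 24-hour TTL, and the function names are all hypothetical. The `KeyValueStore` interface mirrors the subset of the Web Storage API the sketch needs, so `window.localStorage` could be passed in the browser, while `MemoryStore` keeps the example runnable elsewhere.

```typescript
// Subset of the Web Storage API used by this sketch.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
  removeItem(key: string): void;
}

// In-memory stand-in so the example runs outside a browser.
class MemoryStore implements KeyValueStore {
  private items = new Map<string, string>();
  getItem(key: string): string | null {
    const value = this.items.get(key);
    return value === undefined ? null : value;
  }
  setItem(key: string, value: string): void {
    this.items.set(key, value);
  }
  removeItem(key: string): void {
    this.items.delete(key);
  }
}

// All three constants are assumptions chosen for illustration.
const NAMESPACE = "loopprep:quiz:";
const SCHEMA_VERSION = 2;
const TTL_MS = 24 * 60 * 60 * 1000;

type Envelope<T> = { version: number; updatedAt: number; data: T };

function saveSession<T>(store: KeyValueStore, name: string, data: T, now: number = Date.now()): void {
  const envelope: Envelope<T> = { version: SCHEMA_VERSION, updatedAt: now, data };
  store.setItem(NAMESPACE + name, JSON.stringify(envelope));
}

// Returns the stored data, or null (and removes the entry) when it is
// missing, corrupted, from another schema version, or older than the TTL.
function loadSession<T>(store: KeyValueStore, name: string, now: number = Date.now()): T | null {
  const key = NAMESPACE + name;
  const raw = store.getItem(key);
  if (raw === null) return null;
  try {
    const envelope = JSON.parse(raw) as Partial<Envelope<T>>;
    const fresh = typeof envelope.updatedAt === "number" && now - envelope.updatedAt <= TTL_MS;
    if (envelope.version === SCHEMA_VERSION && fresh && envelope.data !== undefined) {
      return envelope.data as T;
    }
  } catch (_err) {
    // Corrupted JSON falls through to cleanup below.
  }
  store.removeItem(key);
  return null;
}
```

Because every key shares one namespace prefix, cleanup can target quiz entries only and leave unrelated storage untouched.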

Architecture

High-level architecture

The React web app is a thin client that requests quiz generation and grading from an Express API. The backend validates all payloads using shared Zod schemas from the monorepo, then chooses between AI-assisted generation and a deterministic seed fallback.

On the frontend, the quiz flow is persisted locally with TTL and versioning, and guarded against accidental navigation so practice sessions feel stable and predictable.

Engineering approach

How it was engineered

The core philosophy is reliability-first AI. Instead of trusting model output directly, the system enforces strict schemas, normalization, and validation, then falls back deterministically whenever content is missing or invalid. This keeps product behavior consistent despite probabilistic generation.
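
One way the seed fallback could work is sketched below, with difficulty widening, shuffling, and source tagging. The types, the `fallbackQuiz` name, and the three difficulty levels are assumptions. The seeded shuffle (a mulberry32 generator feeding a Fisher-Yates pass) is also an assumption: it makes the output reproducible for a given seed, but the write-up does not say how the project shuffles.

```typescript
type Difficulty = "easy" | "medium" | "hard";
const LEVELS: Difficulty[] = ["easy", "medium", "hard"];

// Hypothetical seed-bank entry and its tagged output form.
type SeedQuestion = { id: string; difficulty: Difficulty; prompt: string };
type TaggedQuestion = SeedQuestion & { source: "seed" };

// mulberry32: small seeded PRNG returning values in [0, 1).
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Fisher-Yates shuffle in place, driven by the supplied generator.
function shuffle<T>(items: T[], rand: () => number): void {
  for (let i = items.length - 1; i > 0; i--) {
    const j = Math.floor(rand() * (i + 1));
    const tmp = items[i];
    items[i] = items[j];
    items[j] = tmp;
  }
}

function fallbackQuiz(
  bank: SeedQuestion[],
  difficulty: Difficulty,
  count: number,
  seed: number,
): TaggedQuestion[] {
  const rand = mulberry32(seed);
  const target = LEVELS.indexOf(difficulty);
  const picked: SeedQuestion[] = [];
  // Start at the requested level, then widen to levels one and two steps away.
  for (let distance = 0; distance < LEVELS.length && picked.length < count; distance++) {
    const pool = bank.filter(
      (q) => Math.abs(LEVELS.indexOf(q.difficulty) - target) === distance,
    );
    shuffle(pool, rand);
    picked.push(...pool.slice(0, count - picked.length));
  }
  // Tag every item so the UI can tell seed content from AI content.
  return picked.map((q) => ({ ...q, source: "seed" as const }));
}
```

In this sketch, questions at the requested difficulty always come first, and widening only happens when the exact level cannot fill the quiz.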

I separated concerns across the monorepo: shared contracts as the source of truth, a thin web client, and a backend that owns generation/grading orchestration. This reduces coupling and makes iteration safer as features evolve.

Practical trade-offs were intentional for an early product: backend state is lightweight (in-memory) and “unlimited mode” uses a simple unlock-token mechanism. Deploy targets are straightforward: web on a static-friendly host and API on a server host, keeping the setup low-friction for iteration.
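
The in-memory limiter and unlock-token flow might look something like the sketch below. The class name, the per-client counting, and the choice to return `"fallback"` once the free quota is spent (rather than rejecting the request) are all assumptions. State lives in process memory, so it resets whenever the server restarts.

```typescript
type GenerationMode = "ai" | "fallback";

// Hypothetical limiter: counts AI generations per client in memory.
class GenerationLimiter {
  private limit: number;
  private unlockToken: string;
  private used = new Map<string, number>();
  private unlocked = new Set<string>();

  constructor(limit: number, unlockToken: string) {
    this.limit = limit;
    this.unlockToken = unlockToken;
  }

  // Switch a client to unlimited mode when the token matches.
  unlock(clientId: string, token: string): boolean {
    if (token !== this.unlockToken) return false;
    this.unlocked.add(clientId);
    return true;
  }

  // Decide which path serves this request and record the usage.
  tryConsume(clientId: string): GenerationMode {
    if (this.unlocked.has(clientId)) return "ai";
    const count = this.used.get(clientId) ?? 0;
    if (count >= this.limit) return "fallback";
    this.used.set(clientId, count + 1);
    return "ai";
  }
}

// Usage: two free AI generations, then seed fallback until unlocked.
// "demo-unlock" is a placeholder; a real token would come from configuration.
const limiter = new GenerationLimiter(2, "demo-unlock");
const modes = [
  limiter.tryConsume("client-a"),
  limiter.tryConsume("client-a"),
  limiter.tryConsume("client-a"),
];
```

After these three calls, `modes` holds `"ai"`, `"ai"`, `"fallback"`. A later `limiter.unlock("client-a", "demo-unlock")` would switch that client back to `"ai"`.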

Impact

What LoopPrep delivers

- A structured interview-prep quiz experience: configure → practice → review → repeat, with topic-aware breakdowns that make weaknesses obvious.

- Trustworthy AI assistance: the app remains functional and predictable when AI fails, thanks to strict schema validation and deterministic seed fallback.

- “Session-safe” practice: progress is preserved with local persistence, TTL/versioning, and navigation guards that prevent accidental loss during active quizzes.

- Strong full-stack alignment: shared domain contracts keep frontend and backend synchronized as the platform evolves.

- Product-ready mechanics: controlled free-tier AI usage with a lightweight unlock flow suitable for demo monetization.